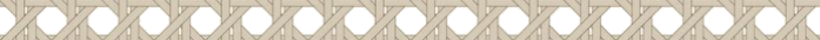

Control of your codebase is an illusion

I need to say something that might make a few engineering leaders uncomfortable: you don’t have control of your codebase. You never did.

I’ve been leading engineering organizations for over 25 years. In that time, I’ve managed teams of five and teams of hundreds. I’ve shipped software for telecom, healthcare, and aviation/aerospace. And across all of it, I’ve never once looked at a production codebase and said with full confidence, “We know exactly what’s in here and it all works correctly.”

We have shipping software, and on that basis, yes, you could argue we have control. But then production breaks. A bug surfaces in a code path nobody remembers writing. A security audit reveals a vulnerability that’s been there for three years. A customer hits an edge case that never appeared in any test plan. And every time, the postmortem reveals the same root causes: the code review was rushed, the requirements were ambiguous, the test coverage had gaps, the team was under pressure.

Sound familiar? Hold that thought.

The promise and the misunderstanding

Now AI enters the picture. And the reaction from engineering leaders and engineers alike tends to fall into one of two camps.

Camp one: AI generates perfect code most of the time. Just let it build things and review the output quickly. We’ll move faster and we can ship with a leaner team.

Camp two: AI-generated code can’t be trusted. We don’t know what’s in there. The code could have subtle bugs, security holes, or logic that doesn’t match our requirements. We need to keep writing everything by hand.

Both camps are wrong, and they’re wrong for the same reason. They both assume that human-written code was under control in the first place.

Here’s what several recent industry reports suggest. Stack Overflow’s 2025 developer survey found that 84% of developers are using or planning to use AI tools, but only a small minority highly trust the accuracy of the output. That gap matters. Developers are using the tools because they increase output, but they still do not feel safe treating the output as inherently reliable.

Other recent vendor research points in the same direction. CodeRabbit reported that AI-generated pull requests in its analysis contained more issues overall than human-generated pull requests. Meanwhile, Qodo’s 2025 State of AI Code Quality report points to a more nuanced reality: teams are seeing meaningful productivity gains from AI, but trust, review quality, and context handling remain unresolved.

That should not surprise us. We were already shipping bugs constantly with human-written code. We were just shipping them slower.

The fix you’ve always needed

Here’s where it gets interesting. When I ask engineering teams what would fix their production quality issues, the answer is always the same. More thorough code reviews. Better defined requirements and acceptance criteria. Higher test coverage. More static analysis. More security scanning. Better traceability from requirements to deployment.

That list is not new. Teams have been asking for those things for decades. The reason they don’t get them is simple: they take time, and time is the one thing engineering teams never have enough of.

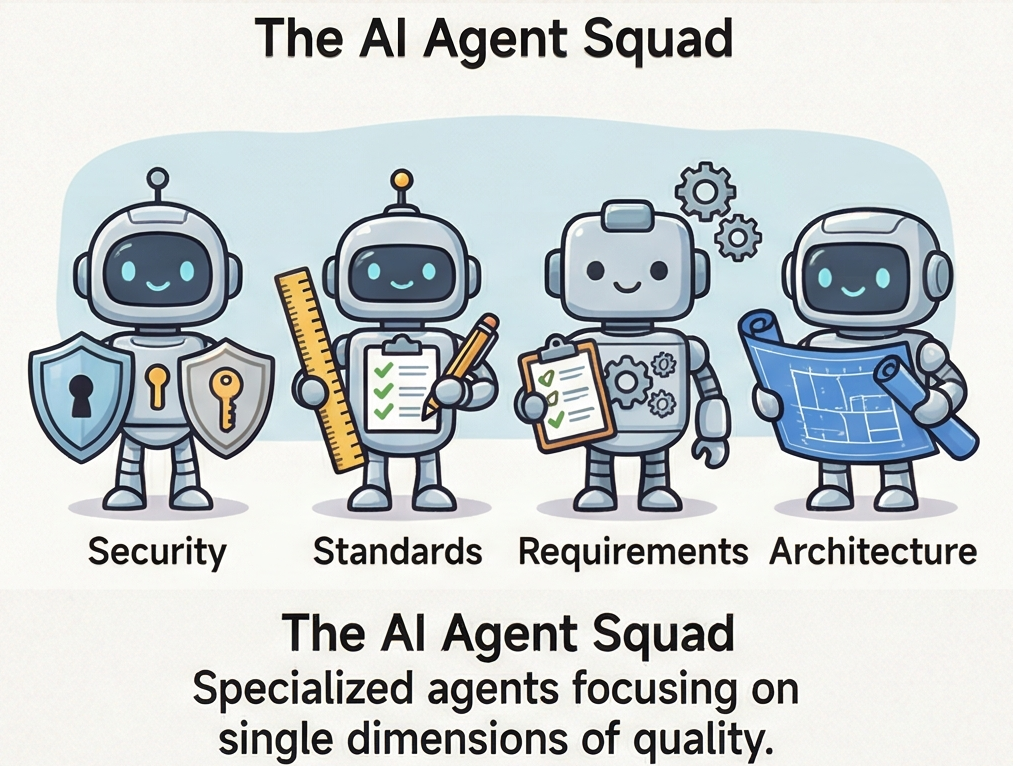

Now consider this: what if AI didn’t just write the code, but also performed every one of those verification steps? Not one AI reviewing everything in a single pass, which is just as limited as one human reviewing everything in a single pass, but multiple specialized AI agents, each focused on a single dimension of quality, running in parallel.

One agent reviews authentication flows, secret handling, and dependency risks. Another checks language-specific best practices and your company’s coding standards. Another validates test coverage against acceptance criteria. Another compares the implementation against the ticket or user story to confirm the code actually does what the team intended. Another reviews architectural boundaries, coupling, and patterns that tend to decay over time.

Each agent has its own context window loaded with only the reference material it needs. No context dilution. No attention fatigue. No “I looked at it for 15 minutes between meetings.”
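To make that shape concrete, here is a minimal sketch of specialized reviewers running concurrently over the same diff. Everything in it is an assumption for illustration: the `Reviewer` and `Finding` types, the reference file names, and the pattern matching that stands in for a real model call. The point is only the structure: each reviewer carries its own narrow context, and the reviews run side by side rather than in one pass.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    reviewer: str
    message: str


@dataclass(frozen=True)
class Reviewer:
    """One specialized agent: a single quality dimension, its own context."""
    name: str
    references: tuple        # hypothetical: the only material this agent would load
    flagged_patterns: tuple  # stand-in for what a real model would look for

    async def review(self, diff: str) -> list:
        # Placeholder for a model call made with only self.references in context.
        # Here we simply scan the diff for patterns this reviewer cares about.
        await asyncio.sleep(0)
        return [
            Finding(self.name, f"contains {pattern!r}")
            for pattern in self.flagged_patterns
            if pattern in diff
        ]


async def run_reviews(diff: str, reviewers) -> list:
    # Start every reviewer concurrently; gather returns results in input order.
    per_reviewer = await asyncio.gather(*(r.review(diff) for r in reviewers))
    return [finding for findings in per_reviewer for finding in findings]


# Hypothetical reviewers and reference documents, for illustration only.
REVIEWERS = (
    Reviewer("security", ("secrets-policy.md",), ('password = "',)),
    Reviewer("standards", ("style-guide.md",), ("print(",)),
)

SAMPLE_DIFF = 'password = "hunter2"\nprint("debug")\n'

if __name__ == "__main__":
    for finding in asyncio.run(run_reviews(SAMPLE_DIFF, REVIEWERS)):
        print(f"[{finding.reviewer}] {finding.message}")
```

In a real pipeline the body of `review` would call a model, and the list of findings would feed whatever human checkpoint comes next.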

This is what I’m calling SWE 3.0: the orchestrated era of software engineering. Not “AI writes code,” but “AI generates, verifies, and traces code through specialized checks before humans approve risk.”

And the core insight is this: the verification layers that would have prevented your production bugs with human-written code are the same layers that make AI-generated code trustworthy. The difference is that AI makes those layers economically feasible at a depth and consistency most teams could never afford before.

Trust is built, not assumed

The problem most people have, both engineers and leaders, is that they expect AI to one-shot a solution: to produce working, bug-free, spec-compliant code on the first try. In 30 years of working with hundreds of engineers, I have never seen a single human do this consistently. We’ve always relied on other people and other stages to check the work: code reviews, QA, testing, staging environments, canary deployments.

AI doesn’t change the fundamental dynamic. It just means we’re having AI check other AI’s work, with humans orchestrating the process and stepping in at defined checkpoints. The orchestration is the skill. The checkpoint design is the craft.

When I talk to CTOs and VPs who say they don’t trust AI-generated code, I ask them a simple question: how much do you trust your human-written code? Really? Because your production incident log suggests otherwise.

The answer isn’t blind trust in either case. The answer is rigorous verification, applied consistently, at a depth that was never possible when it depended entirely on human time and attention. As CodeRabbit put it: “If 2025 was the year of AI speed, 2026 will be the year of AI quality.”

What this means for your team

If you’re an engineering leader, the move isn’t to ban AI or to adopt it blindly. It’s to double down on the process discipline your team has always asked for, and use AI to implement it at scale.

Build the multi-agent review pipeline your codebase deserves. Define the human-in-the-loop checkpoints based on risk, not habit. Create the traceability chain from requirements to deployment that your regulated peers have always maintained and that your team secretly wishes they had.
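As a rough illustration of a checkpoint driven by risk rather than habit, the sketch below routes a change based on three things: whether it traces back to a requirement, whether it touches sensitive paths, and whether automated reviewers left open findings. The path prefixes, field names, and the three outcomes are all hypothetical; your own risk model will look different.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical: areas of the codebase where a human always signs off.
HIGH_RISK_PREFIXES = ("auth/", "billing/", "migrations/")


@dataclass(frozen=True)
class Change:
    requirement_id: Optional[str]  # link back to the ticket or user story
    commit: str
    paths: tuple                   # files touched by the change
    open_findings: int             # unresolved issues from automated reviewers


def checkpoint(change: Change) -> str:
    """Decide how much human attention a change gets, based on risk."""
    # No requirement link means the traceability chain is broken: stop here.
    if change.requirement_id is None:
        return "block"
    touches_high_risk = any(
        path.startswith(HIGH_RISK_PREFIXES) for path in change.paths
    )
    if touches_high_risk or change.open_findings > 0:
        return "human_review"
    # Assumption: low-risk, clean, traceable changes get a lighter path.
    return "fast_track"
```

For example, `checkpoint(Change("REQ-101", "abc123", ("auth/login.py",), 0))` routes to `"human_review"` because of the path alone, while a clean documentation change with a requirement link takes the `"fast_track"` path. What "fast track" means in practice is a decision for each team.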

Your team told you for years: “If we had more time for reviews, testing, and requirements, we wouldn’t have these issues.” They were right. AI doesn’t eliminate the need for time and judgment. It dramatically lowers the cost of applying them consistently.

Control of your codebase was always an illusion. But confidence in your codebase? That’s buildable. And for the first time, you have the tools to build it at the scale it actually requires.